Note

This page is reference documentation. It only explains the class signature, not how to use it. Please refer to the user guide for the big picture.

nilearn.maskers.MultiNiftiLabelsMasker¶

- class nilearn.maskers.MultiNiftiLabelsMasker(labels_img=None, labels=None, lut=None, background_label=0, mask_img=None, smoothing_fwhm=None, standardize=False, standardize_confounds=True, high_variance_confounds=False, detrend=False, low_pass=None, high_pass=None, t_r=None, dtype=None, resampling_target='data', memory=None, memory_level=1, verbose=0, strategy='mean', keep_masked_labels=False, reports=True, cmap='CMRmap_r', n_jobs=1, clean_args=None)[source]¶

Class for extracting data from multiple Niimg-like objects using labels of non-overlapping brain regions.

MultiNiftiLabelsMasker is useful when data from non-overlapping volumes and from different subjects should be extracted (contrary to nilearn.maskers.NiftiLabelsMasker).

For more details on the definitions of labels in Nilearn, see the Extraction of signals from regions: NiftiLabelsMasker, NiftiMapsMasker section.

- Parameters:

- labels_img : Niimg-like object or None, default=None

See Input and output: neuroimaging data representation. Region definitions, as one image of labels.

- labels

list of str or None, default=None Full labels corresponding to the labels image. This is used to improve reporting quality if provided.

Warning

The labels must be consistent with the label values provided through labels_img.

- lut

pandas.DataFrame or str or pathlib.Path to a TSV file or None, default=None Mutually exclusive with labels. Acts as a look-up table (LUT) with at least the columns ‘index’ and ‘name’. Formatted according to the ‘dseg.tsv’ format from BIDS.

Warning

If a region exists in the atlas image but is missing from its associated LUT, a new entry will be added to the LUT during fit with the name “unknown”. Conversely, if regions listed in the LUT do not exist in the associated atlas image, they will be dropped from the LUT during fit.

- background_label

int or float, default=0 Label used in labels_img to represent background.

Warning

This value must be consistent with label values and image provided.

- mask_img : Niimg-like object or None, default=None

See Input and output: neuroimaging data representation. Mask to apply to regions before extracting signals.

- smoothing_fwhm

float or int or None, optional. If smoothing_fwhm is not None, it gives the full-width at half maximum in millimeters of the spatial smoothing to apply to the signal.

- standardize : any of ‘zscore_sample’, ‘zscore’, ‘psc’, True, False or None; default=False

Strategy to standardize the signal:

'zscore_sample': The signal is z-scored. Timeseries are shifted to zero mean and scaled to unit variance. Uses sample std.

'psc': Timeseries are shifted to zero mean value and scaled to percent signal change (as compared to original mean signal).

True: The signal is z-scored (same as option zscore). Timeseries are shifted to zero mean and scaled to unit variance.

Deprecated since Nilearn 0.13.0: In nilearn version 0.15.0, True will be replaced by 'zscore_sample'.

False: Do not standardize the data.

Deprecated since Nilearn 0.13.0: In nilearn version 0.15.0, False will be replaced by None.

Deprecated since Nilearn 0.13.0: The default will be changed to None in version 0.15.0.

- standardize_confounds

bool, default=True If set to True, the confounds are z-scored: their mean is put to 0 and their variance to 1 in the time dimension.
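The z-scoring and percent-signal-change options described for standardize can be illustrated with plain NumPy. This is only a sketch of the arithmetic under the definitions given above (zero mean, unit sample variance, percent change relative to the original mean). It is not nilearn's implementation, and the toy signal array is made up.

```python
import numpy as np

# Toy signal: 4 scans (rows) x 2 regions (columns); values are illustrative.
signal = np.array([
    [100.0, 50.0],
    [102.0, 55.0],
    [98.0, 45.0],
    [100.0, 50.0],
])

mean = signal.mean(axis=0)

# 'zscore_sample'-style: zero mean, unit variance using the sample std (ddof=1).
zscore_sample = (signal - mean) / signal.std(axis=0, ddof=1)

# 'psc'-style: zero mean, scaled to percent change relative to the original mean.
psc = (signal - mean) / np.abs(mean) * 100.0

print(zscore_sample.std(axis=0, ddof=1))  # unit sample std per column
print(psc[1])  # second scan, expressed in percent of each column's mean
```

Both operations act along the time dimension, independently for each column.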

- high_variance_confounds

bool, default=False If True, high variance confounds are computed on provided image with nilearn.image.high_variance_confounds and default parameters and regressed out.

- detrend

bool, optional Whether to detrend signals or not.

- low_pass

float or int or None, default=None Low cutoff frequency in Hertz. If specified, signals above this frequency will be filtered out. If None, no low-pass filtering will be performed.

- high_pass

float or int or None, default=None High cutoff frequency in Hertz. If specified, signals below this frequency will be filtered out.

- t_r

float or int or None, default=None Repetition time, in seconds (sampling period). Set to None if not provided.

- dtype : dtype like, “auto” or None, default=None

Data type toward which the data should be converted. If “auto”, the data will be converted to int32 if dtype is discrete and float32 if it is continuous. If None, data will not be converted to a new data type.

- resampling_target : {“data”, “labels”, None}, default=“data”

Defines which image gives the final shape/size:

"data" means the atlas is resampled to the shape of the data if needed.

"labels" means that the mask_img and images provided to fit() are resampled to the shape and affine of labels_img.

None means no resampling: if shapes and affines do not match, a ValueError is raised.

- memory : None, instance of joblib.Memory, str, or pathlib.Path, default=None

Used to cache the masking process. By default, no caching is done. If a str is given, it is the path to the caching directory.

- memory_level

int, default=1 Rough estimator of the amount of memory used by caching. Higher value means more memory for caching. Zero means no caching.

- verbose

bool or int, default=0 Verbosity level (0 or False means no message).

- strategy

str, default=“mean” The name of a valid function to reduce the region with. Must be one of: sum, mean, median, minimum, maximum, variance, standard_deviation.

- keep_masked_labels

bool, default=False When a mask is supplied through the “mask_img” parameter, some atlas regions may lie entirely outside of the brain mask, resulting in empty time series for those regions. If True, the masked atlas with these empty labels will be retained in the output, resulting in corresponding time series containing zeros only. If False, the empty labels will be removed from the output, ensuring no empty time series are present.

Deprecated since Nilearn 0.10.2.

Changed in Nilearn 0.13.0: The keep_masked_labels parameter will be removed in 0.15.

- reports

bool, default=True If set to True, data is saved in order to produce a report.

- cmap

matplotlib.colors.Colormap or str, default=“CMRmap_r” The colormap to use: either the name of a matplotlib colormap or a matplotlib colormap object. Only relevant for the report figures.

- n_jobs

int, default=1 The number of CPUs to use to do the computation. -1 means ‘all CPUs’.

- clean_args

dict or None, default=None Keyword arguments to be passed to clean called within the masker. Within clean, kwargs prefixed with 'butterworth__' will be passed to the Butterworth filter.
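To make the roles of labels_img, background_label and strategy concrete, the following NumPy sketch reduces voxel values to one value per region with a mean. It is a conceptual illustration only: the flattened label array and data are invented, and the loop is not how nilearn performs the extraction internally.

```python
import numpy as np

# Flattened hypothetical label image: 0 is the background label.
labels = np.array([0, 1, 1, 2, 2, 2])
background_label = 0

# Hypothetical data: 2 scans (rows) x 6 voxels (columns).
data = np.array([
    [9.0, 1.0, 3.0, 2.0, 4.0, 6.0],
    [9.0, 5.0, 7.0, 0.0, 0.0, 3.0],
])

# Every label value except the background defines one region.
region_ids = [int(v) for v in np.unique(labels) if v != background_label]

# strategy="mean": average the voxels of each region, scan by scan.
signals = np.column_stack(
    [data[:, labels == region_id].mean(axis=1) for region_id in region_ids]
)

print(region_ids)  # one entry per output column
print(signals)     # shape: (number of scans, number of regions)
```

Swapping `.mean` for `.sum`, `.max` and so on gives the analogous behavior for the other reduction strategies listed above.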

- Attributes:

- clean_args_

dict Keyword arguments to be passed to clean called within the masker. Within clean, kwargs prefixed with 'butterworth__' will be passed to the Butterworth filter.

- labels_img_

nibabel.nifti1.Nifti1Image The labels image.

- lut_

pandas.DataFrame Look-up table derived from the labels or lut or from the values of the label image.

- mask_img_

A 3D binary nibabel.nifti1.Nifti1Image or None. The mask of the data. If no mask_img was passed at masker construction, then mask_img_ is None; otherwise it is the binarized version of mask_img, where each voxel is True if all values across samples (for example across timepoints) are finite and different from 0.

- memory_

joblib memory cache

- __init__(labels_img=None, labels=None, lut=None, background_label=0, mask_img=None, smoothing_fwhm=None, standardize=False, standardize_confounds=True, high_variance_confounds=False, detrend=False, low_pass=None, high_pass=None, t_r=None, dtype=None, resampling_target='data', memory=None, memory_level=1, verbose=0, strategy='mean', keep_masked_labels=False, reports=True, cmap='CMRmap_r', n_jobs=1, clean_args=None)[source]¶

- background_label¶

- fit(imgs=None, y=None)[source]¶

Compute the mask corresponding to the data.

- Parameters:

- imgs

list of Niimg-like objects or None, default=None See Input and output: neuroimaging data representation. Data on which the mask must be calculated. If this is a list, the affine is considered the same for all.

- y : None

This parameter is unused. It is solely included for scikit-learn compatibility.

- fit_transform(imgs, y=None, confounds=None, sample_mask=None, **fit_params)[source]¶

Fit to data, then transform it.

- Parameters:

- imgs : Image object, or a list of Image objects

See Input and output: neuroimaging data representation. Data to be preprocessed.

- y : None

This parameter is unused. It is solely included for scikit-learn compatibility.

- confounds

list of confounds, default=None List of confounds (arrays, dataframes, str or path of files loadable into an array). As confounds are passed to nilearn.signal.clean, please see the related documentation for details about accepted types. Must be of the same length as imgs.

- sample_mask

list of sample_mask, default=None List of sample_mask (any type compatible with numpy-array indexing) to use for scrubbing outliers. Must be of the same length as imgs.

shape = (total number of scans - number of scans removed) for explicit index (for example, sample_mask=np.asarray([1, 2, 4])), or shape = (number of scans) for binary mask (for example, sample_mask=np.asarray([False, True, True, False, True])). Masks the images along the last dimension to perform scrubbing: for example to remove volumes with high motion and/or non-steady-state volumes. This parameter is passed to nilearn.signal.clean.

Added in Nilearn 0.8.0.

- Returns:

- signals

list of numpy.ndarray or numpy.ndarray Signal for each element. Output shape for:

3D images: (number of elements,) array

4D images: (number of scans, number of elements) array

list of 3D images: list of (number of elements,) array

list of 4D images: list of (number of scans, number of elements) array
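The two sample_mask forms described in the parameters (explicit indices and a binary mask) select the same scans. The NumPy sketch below shows this on a made-up time series; it only illustrates the indexing semantics and does not call nilearn.

```python
import numpy as np

n_scans = 5
# Hypothetical extracted signals: 5 scans (rows) x 2 regions (columns).
series = np.arange(n_scans * 2).reshape(n_scans, 2)

# Explicit index form: shape = (number of scans kept,)
index_mask = np.asarray([1, 2, 4])

# Binary form: shape = (number of scans,)
binary_mask = np.asarray([False, True, True, False, True])

kept_by_index = series[index_mask]
kept_by_binary = series[binary_mask]

# Both forms keep scans 1, 2 and 4 and drop scans 0 and 3.
print(kept_by_index.shape)
print(np.array_equal(kept_by_index, kept_by_binary))
```

When several images are passed, one such mask is expected per image, in the same order as imgs.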


- generate_report(engine='matplotlib', title=None, **kwargs)[source]¶

Generate an HTML report for this masker.

The HTMLReport can be opened in a browser, displayed in a notebook, or saved to disk as a standalone HTML file.

Note

This functionality requires Matplotlib to be installed.

- Parameters:

- engine

str, default=“matplotlib” Choice of engine to display report figures.

All maskers support "matplotlib" as engine.

Other options are:

"brainsprite": NiftiMasker, MultiNiftiMasker, NiftiLabelsMasker, MultiNiftiLabelsMasker

"plotly": SurfaceMasker, MultiSurfaceMasker, SurfaceLabelsMasker, MultiSurfaceLabelsMasker, SurfaceMapsMasker, MultiSurfaceMapsMasker

- title

str or None, default=None Title for the report. If None, the title will be the class name.

- kwargs

dict[str, Any] Dictionary of keyword arguments necessary for report generation.

Expected keys depending on masker type are:

For NiftiMapsMasker, MultiNiftiMapsMasker, SurfaceMapsMasker, MultiSurfaceMapsMasker :

- displayed_maps

int, ndarray or list of int, or “all”, default=10 Indicates which maps will be displayed in the HTML report.

If "all": All maps will be displayed in the report.

masker.generate_report(displayed_maps="all")

If a list or ndarray of int: Only the maps at these indices will be displayed.

masker.generate_report(displayed_maps=[6, 3, 12])

If an int: This will only display the first n maps, n being the value of the parameter. By default, the report will only contain the first 10 maps. Example to display the first 16 maps:

masker.generate_report(displayed_maps=16)

For NiftiSpheresMasker :

- displayed_spheres

int, ndarray or list of int, or “all”, default=10 Indicates which spheres will be displayed in the HTML report.

If "all": All spheres will be displayed in the report.

masker.generate_report(displayed_spheres="all")

If a list or ndarray of int: Only the spheres at these indices will be displayed.

masker.generate_report(displayed_spheres=[6, 3, 12])

If an int: This will only display the first n spheres, n being the value of the parameter. By default, the report will only contain the first 10 spheres. Example to display the first 16 spheres:

masker.generate_report(displayed_spheres=16)

- Returns:

- report : nilearn.reporting.HTMLReport

HTML report for the masker.

- get_feature_names_out(input_features=None)[source]¶

Get output feature names for transformation.

- Parameters:

- input_features : default=None

Only for sklearn API compatibility.

- get_metadata_routing()¶

Get metadata routing of this object.

Please check User Guide on how the routing mechanism works.

- Returns:

- routing : MetadataRequest

A MetadataRequest encapsulating routing information.

- get_params(deep=True)¶

Get parameters for this estimator.

- Parameters:

- deep : bool, default=True

If True, will return the parameters for this estimator and contained subobjects that are estimators.

- Returns:

- params : dict

Parameter names mapped to their values.

- inverse_transform(signals)[source]¶

Compute voxel signals from region signals.

Any mask given at initialization is taken into account.

Changed in Nilearn 0.9.2: This method now supports 1D arrays, which will produce 3D images.

- Parameters:

- signals : 1D/2D numpy.ndarray

Extracted signal. If a 1D array is provided, then the shape should be (number of elements,). If a 2D array is provided, then the shape should be (number of scans, number of elements).

- Returns:

- img

nibabel.nifti1.Nifti1Image Transformed image in brain space. Output shape for:

1D array: a 3D nibabel.nifti1.Nifti1Image will be returned.

2D array: a 4D nibabel.nifti1.Nifti1Image will be returned.
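Conceptually, going from region signals back to voxel space means giving every voxel of a region that region's signal, while background voxels stay at zero. The NumPy sketch below illustrates this idea on flattened, made-up arrays. It is not nilearn's implementation and ignores masks, affines and image objects.

```python
import numpy as np

# Flattened hypothetical label image (0 = background) and its region ids,
# listed in the same order as the signal columns.
labels = np.array([0, 1, 1, 2])
region_ids = [1, 2]

# Hypothetical region signals: 2 scans (rows) x 2 regions (columns).
signals = np.array([
    [2.0, 4.0],
    [6.0, 1.0],
])

# Voxel-space array: (number of scans, number of voxels), zeros by default.
voxels = np.zeros((signals.shape[0], labels.size))
for column, region_id in enumerate(region_ids):
    # Broadcast the region's time series to all voxels carrying its label.
    voxels[:, labels == region_id] = signals[:, [column]]

print(voxels)
```

A 1D input (a single scan) would follow the same logic and correspond to a 3D image rather than a 4D one.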


- property labels_¶

Return list of labels of the regions.

The background label is included if present in the image.

- lut_¶

- property n_elements_¶

Return number of regions.

This is equal to the number of unique values in the fitted label image, minus the background value.

- property region_ids_¶

Return dictionary containing the region ids corresponding to each column in the array returned by transform.

The region id corresponding to region_signal[:,i] is region_ids_[i]. region_ids_['background'] is the background label.

- property region_names_¶

Return a dictionary containing the region names corresponding to each column in the array returned by transform.

The region names correspond to the labels provided in labels in input. The region name corresponding to region_signal[:,i] is region_names_[i].

- set_fit_request(*, imgs='$UNCHANGED$')¶

Request metadata passed to the fit method.

Note that this method is only relevant if enable_metadata_routing=True (see sklearn.set_config). Please see User Guide on how the routing mechanism works.

The options for each parameter are:

True: metadata is requested, and passed to fit if provided. The request is ignored if metadata is not provided.

False: metadata is not requested and the meta-estimator will not pass it to fit.

None: metadata is not requested, and the meta-estimator will raise an error if the user provides it.

str: metadata should be passed to the meta-estimator with this given alias instead of the original name.

The default (sklearn.utils.metadata_routing.UNCHANGED) retains the existing request. This allows you to change the request for some parameters and not others.

Added in version 1.3.

Note

This method is only relevant if this estimator is used as a sub-estimator of a meta-estimator, e.g. used inside a Pipeline. Otherwise it has no effect.

- Parameters:

- imgs : str, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

Metadata routing for imgs parameter in fit.

- Returns:

- self : object

The updated object.

- set_inverse_transform_request(*, signals='$UNCHANGED$')¶

Request metadata passed to the inverse_transform method.

Note that this method is only relevant if enable_metadata_routing=True (see sklearn.set_config). Please see User Guide on how the routing mechanism works.

The options for each parameter are:

True: metadata is requested, and passed to inverse_transform if provided. The request is ignored if metadata is not provided.

False: metadata is not requested and the meta-estimator will not pass it to inverse_transform.

None: metadata is not requested, and the meta-estimator will raise an error if the user provides it.

str: metadata should be passed to the meta-estimator with this given alias instead of the original name.

The default (sklearn.utils.metadata_routing.UNCHANGED) retains the existing request. This allows you to change the request for some parameters and not others.

Added in version 1.3.

Note

This method is only relevant if this estimator is used as a sub-estimator of a meta-estimator, e.g. used inside a Pipeline. Otherwise it has no effect.

- Parameters:

- signals : str, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

Metadata routing for signals parameter in inverse_transform.

- Returns:

- self : object

The updated object.

- set_output(*, transform=None)[source]¶

Set the output container when "transform" is called.

Warning

This has not been implemented yet.

- set_params(**params)¶

Set the parameters of this estimator.

The method works on simple estimators as well as on nested objects (such as Pipeline). The latter have parameters of the form <component>__<parameter> so that it’s possible to update each component of a nested object.

- Parameters:

- **params : dict

Estimator parameters.

- Returns:

- self : estimator instance

Estimator instance.

- set_transform_request(*, confounds='$UNCHANGED$', imgs='$UNCHANGED$', sample_mask='$UNCHANGED$')¶

Request metadata passed to the transform method.

Note that this method is only relevant if enable_metadata_routing=True (see sklearn.set_config). Please see User Guide on how the routing mechanism works.

The options for each parameter are:

True: metadata is requested, and passed to transform if provided. The request is ignored if metadata is not provided.

False: metadata is not requested and the meta-estimator will not pass it to transform.

None: metadata is not requested, and the meta-estimator will raise an error if the user provides it.

str: metadata should be passed to the meta-estimator with this given alias instead of the original name.

The default (sklearn.utils.metadata_routing.UNCHANGED) retains the existing request. This allows you to change the request for some parameters and not others.

Added in version 1.3.

Note

This method is only relevant if this estimator is used as a sub-estimator of a meta-estimator, e.g. used inside a Pipeline. Otherwise it has no effect.

- Parameters:

- confounds : str, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

Metadata routing for confounds parameter in transform.

- imgs : str, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

Metadata routing for imgs parameter in transform.

- sample_mask : str, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

Metadata routing for sample_mask parameter in transform.

- Returns:

- self : object

The updated object.

- transform(imgs, confounds=None, sample_mask=None)[source]¶

Apply mask, spatial and temporal preprocessing.

- Parameters:

- imgs : Image object, or a list of Image objects

See Input and output: neuroimaging data representation. Data to be preprocessed

- confounds

list of confounds, default=None List of confounds (arrays, dataframes, str or path of files loadable into an array). As confounds are passed to nilearn.signal.clean, please see the related documentation for details about accepted types. Must be of the same length as imgs.

- sample_mask

list of sample_mask, default=None List of sample_mask (any type compatible with numpy-array indexing) to use for scrubbing outliers. Must be of the same length as imgs.

shape = (total number of scans - number of scans removed) for explicit index (for example, sample_mask=np.asarray([1, 2, 4])), or shape = (number of scans) for binary mask (for example, sample_mask=np.asarray([False, True, True, False, True])). Masks the images along the last dimension to perform scrubbing: for example to remove volumes with high motion and/or non-steady-state volumes. This parameter is passed to nilearn.signal.clean.

- Returns:

- signals

list of numpy.ndarray or numpy.ndarray Signal for each element. Output shape for:

3D images: (number of elements,) array

4D images: (number of scans, number of elements) array

list of 3D images: list of (number of elements,) array

list of 4D images: list of (number of scans, number of elements) array


- transform_imgs(imgs_list, confounds=None, n_jobs=1, sample_mask=None)[source]¶

Extract signals from a list of 4D niimgs.

- Parameters:

- imgs_list

list of Niimg-like objects See Input and output: neuroimaging data representation. Images to process.

- confounds

list of confounds, default=None List of confounds (arrays, dataframes, str or path of files loadable into an array). As confounds are passed to nilearn.signal.clean, please see the related documentation for details about accepted types. Must be of the same length as imgs_list.

- n_jobs

int, default=1 The number of CPUs to use to do the computation. -1 means ‘all CPUs’.

- sample_mask

list of sample_mask, default=None List of sample_mask (any type compatible with numpy-array indexing) to use for scrubbing outliers. Must be of the same length as imgs_list.

shape = (total number of scans - number of scans removed) for explicit index (for example, sample_mask=np.asarray([1, 2, 4])), or shape = (number of scans) for binary mask (for example, sample_mask=np.asarray([False, True, True, False, True])). Masks the images along the last dimension to perform scrubbing: for example to remove volumes with high motion and/or non-steady-state volumes. This parameter is passed to nilearn.signal.clean.

- Returns:

- signals

list of numpy.ndarray Signal for each element. Output shape for:

list of 3D images: list of (number of elements,) array

list of 4D images: list of (number of scans, number of elements) array


- transform_single_imgs(imgs, confounds=None, sample_mask=None)[source]¶

Extract signals from a single 4D niimg.

- Parameters:

- imgs : 3D/4D Niimg-like object

See Input and output: neuroimaging data representation. Images to process.

- confounds

numpy.ndarray, str, pathlib.Path, pandas.DataFrame or list of confounds timeseries, default=None This parameter is passed to nilearn.signal.clean. Please see the related documentation for details. shape: (number of scans, number of confounds)

- sample_mask : Any type compatible with numpy-array indexing, default=None

shape = (total number of scans - number of scans removed) for explicit index (for example, sample_mask=np.asarray([1, 2, 4])), or shape = (number of scans) for binary mask (for example, sample_mask=np.asarray([False, True, True, False, True])). Masks the images along the last dimension to perform scrubbing: for example to remove volumes with high motion and/or non-steady-state volumes. This parameter is passed to nilearn.signal.clean.

Added in Nilearn 0.8.0.

- Attributes:

- region_atlas_ : Niimg-like object

Regions definition as labels. The labels correspond to the indices in region_ids_. The region in region_atlas_ that takes the value region_ids_[i] is used to compute the signal in region_signal[:, i].

Added in Nilearn 0.10.3.

- Returns:

- signals

numpy.ndarray, pandas.DataFrame or polars.DataFrame Signal for each element.

Changed in Nilearn 0.13.0: Added set_output support.

The type of the output is determined by set_output(): see the scikit-learn documentation.

Output shape for:

For Numpy outputs:

3D images: (number of elements,)

4D images: (number of scans, number of elements) array

For DataFrame outputs:

3D or 4D images: (number of scans, number of elements) array


Examples using nilearn.maskers.MultiNiftiLabelsMasker¶

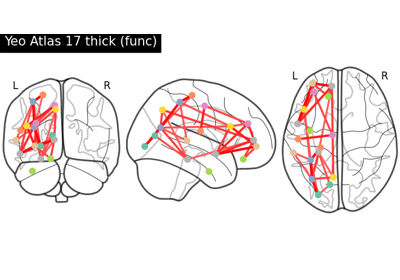

Comparing connectomes on different reference atlases