
An introduction tutorial to fMRI decoding

Here is a simple tutorial on decoding with nilearn. It reproduces the Haxby et al.[1] study on a face vs cat discrimination task in a mask of the ventral stream.

This tutorial is meant as an introduction to the various steps of a decoding analysis using Nilearn's meta-estimator: Decoder.

It is not a minimalistic example, as it strives to be didactic. It is not meant to be copied to analyze new data: many of the steps are unnecessary.

import warnings

warnings.filterwarnings(

"ignore", message="The provided image has no sform in its header."

)

Retrieve and load the fMRI data from the Haxby study

First download the data

The fetch_haxby function will download the Haxby dataset into the nilearn data directory if it is not already present on the disk. It can take a while to download about 310 MB of data from the Internet.

from nilearn import datasets

# By default 2nd subject will be fetched

haxby_dataset = datasets.fetch_haxby()

# 'func' is a list of filenames: one for each subject

fmri_filename = haxby_dataset.func[0]

# print basic information on the dataset

print(f"First subject functional nifti images (4D) are at: {fmri_filename}")

[fetch_haxby] Dataset created in /home/runner/nilearn_data/haxby2001

[fetch_haxby] Downloading data from

https://www.nitrc.org/frs/download.php/7868/mask.nii.gz ...

[fetch_haxby] ...done. (0 seconds, 0 min)

[fetch_haxby] Downloading data from

http://data.pymvpa.org/datasets/haxby2001/MD5SUMS ...

[fetch_haxby] ...done. (0 seconds, 0 min)

[fetch_haxby] Downloading data from

http://data.pymvpa.org/datasets/haxby2001/subj2-2010.01.14.tar.gz ...

[fetch_haxby] Downloaded 163086336 of 291168628 bytes (56.0%%, 00 HR 00 MIN 01

SEC remaining)

[fetch_haxby] ...done. (2 seconds, 0 min)

[fetch_haxby] Extracting data from /home/runner/nilearn_data/haxby2001/9cabe0680

89e791ef0c5fe930fc20e30/subj2-2010.01.14.tar.gz...

[fetch_haxby] .. done.

First subject functional nifti images (4D) are at: /home/runner/nilearn_data/haxby2001/subj2/bold.nii.gz

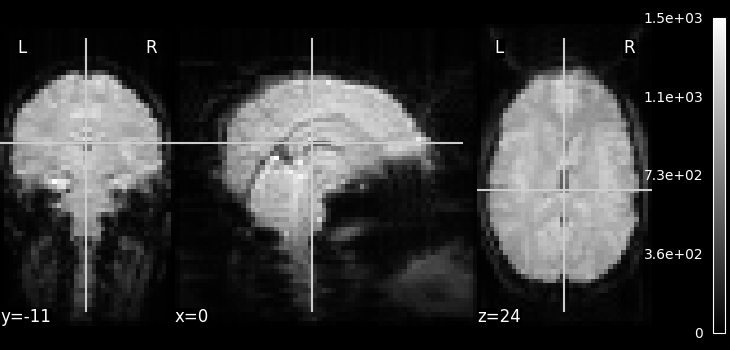

Visualizing the fMRI volume¶

One way to visualize a fMRI volume is

using plot_epi.

We will visualize the previously fetched fMRI

data from Haxby dataset.

Because fMRI data are 4D

(they consist of many 3D EPI images),

we cannot plot them directly using plot_epi

(which accepts just 3D input).

Here we are using mean_img to

extract a single 3D EPI image from the fMRI data.
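To make the reduction from 4D to 3D concrete, here is a minimal NumPy sketch of the array operation behind that averaging step. It assumes the time axis is the last one and uses a small random array as a stand-in for real fMRI data. It is not nilearn's implementation, which also carries along the image affine and header.

```python
import numpy as np

# Hypothetical stand-in for a 4D fMRI series: (x, y, z, time).
rng = np.random.default_rng(0)
fmri_4d = rng.normal(size=(4, 5, 3, 10))

# Average over the last (time) axis to obtain one 3D volume.
mean_3d = fmri_4d.mean(axis=-1)

print(fmri_4d.shape, "->", mean_3d.shape)
```

The resulting 3D array is the kind of input a 3D plotting function expects.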

Feature extraction: from fMRI volumes to a data matrix

These are some really lovely images, but for machine learning we need matrices to work with the actual data. Fortunately, the Decoder object we will use later on can automatically transform Nifti images into matrices.

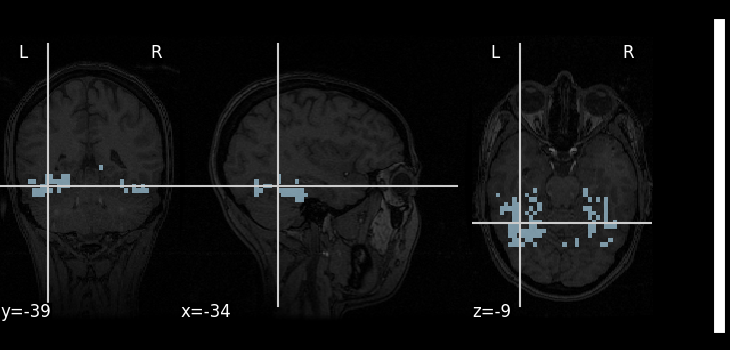

All we have to do for now is define a mask filename.

A mask of the Ventral Temporal (VT) cortex coming from the Haxby study is available:

mask_filename = haxby_dataset.mask_vt[0]

# Let's visualize it, using the subject's anatomical image as a

# background

plot_roi(mask_filename, bg_img=haxby_dataset.anat[0], cmap="Paired")

show()
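As a rough illustration of what "transforming images into a matrix" with a mask means, the sketch below applies a 3D boolean mask to a 4D array with plain NumPy. The array sizes and the mask are made up for the example, and this is only the core indexing idea, not the masker nilearn uses internally.

```python
import numpy as np

# Hypothetical toy data: a 4D series (x, y, z, time) and a 3D boolean mask.
rng = np.random.default_rng(0)
fmri_4d = rng.normal(size=(4, 5, 3, 10))
mask_3d = np.zeros((4, 5, 3), dtype=bool)
mask_3d[1:3, 2:4, 1] = True  # 4 voxels inside the region of interest

# Boolean indexing keeps only in-mask voxels: result is (n_voxels, n_time).
# Transposing gives the (n_samples, n_features) layout used in machine learning.
X = fmri_4d[mask_3d].T

print(X.shape)  # (10, 4)
```

Each row of X is one time point (sample) and each column is one voxel inside the mask (feature).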

Load the behavioral labels

Now that the brain images are converted to a data matrix, we can apply machine-learning to them, for instance to predict the task that the subject was doing. The behavioral labels are stored in a CSV file, separated by spaces.

We use pandas to load them in an array.

import pandas as pd

# Load behavioral information

behavioral = pd.read_csv(haxby_dataset.session_target[0], delimiter=" ")

print(behavioral)

labels chunks

0 rest 0

1 rest 0

2 rest 0

3 rest 0

4 rest 0

... ... ...

1447 rest 11

1448 rest 11

1449 rest 11

1450 rest 11

1451 rest 11

[1452 rows x 2 columns]

The task was a visual-recognition task, and the labels denote the experimental condition: the type of object that was presented to the subject. This is what we are going to try to predict.

conditions = behavioral["labels"]

print(conditions)

0 rest

1 rest

2 rest

3 rest

4 rest

...

1447 rest

1448 rest

1449 rest

1450 rest

1451 rest

Name: labels, Length: 1452, dtype: object
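The printout above only shows rest rows, so it can help to count how often each label occurs. The following sketch uses only the standard library on a made-up excerpt in the same space-separated layout. The rows are illustrative, not taken from the dataset. With the pandas Series from the tutorial, conditions.value_counts() gives the same kind of summary.

```python
import csv
import io
from collections import Counter

# Made-up excerpt in the same space-separated layout (header: labels chunks).
sample_text = "labels chunks\nrest 0\nface 0\nface 0\ncat 0\nrest 1\ncat 1\n"

# Parse the rows, using a single space as the field delimiter.
rows = list(csv.DictReader(io.StringIO(sample_text), delimiter=" "))

# Count how many rows carry each label.
counts = Counter(row["labels"] for row in rows)
print(counts)
```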

Restrict the analysis to cats and faces

As we can see from the targets above, the experiment contains many conditions. As a consequence, the data is quite big. Not all of this data is of interest to us for decoding, so we will keep only the fMRI signals corresponding to faces or cats. We create a mask of the samples belonging to these conditions; this mask is then applied to the fMRI data to restrict the classification to the face vs cat discrimination.

The input data will become much smaller (i.e. fMRI signal is shorter):

condition_mask = conditions.isin(["face", "cat"])

Because the data is in one single large 4D image, we need to use index_img to do the split easily.

from nilearn.image import index_img

fmri_niimgs = index_img(fmri_filename, condition_mask)

We apply the same mask to the targets:

conditions = conditions[condition_mask]

conditions = conditions.to_numpy()

print(f"{conditions.shape=}")

conditions.shape=(216,)
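The key point of this step is that one boolean mask selects both the samples and their labels, so the two stay aligned. Here is a small NumPy sketch of that idea on made-up data; np.isin plays the role that Series.isin plays in the tutorial code.

```python
import numpy as np

# Hypothetical toy session: 8 volumes with one label each.
labels = np.array(
    ["rest", "face", "face", "rest", "cat", "cat", "rest", "house"]
)
X = np.arange(8 * 3).reshape(8, 3)  # 8 samples, 3 features

# True where the label is one of the two conditions of interest.
keep = np.isin(labels, ["face", "cat"])

# The same mask is applied to samples and labels so they stay aligned.
X_kept = X[keep]
labels_kept = labels[keep]

print(X_kept.shape, labels_kept)
```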

Decoding with Support Vector Machine

As a decoder, we use a Support Vector Classifier with a linear kernel. We first create it using Decoder.

from nilearn.decoding import Decoder

decoder = Decoder(

estimator="svc",

mask=mask_filename,

screening_percentile=100,

verbose=1,

)

Note

When viewing a Nilearn estimator in a notebook (or more generally on an HTML page like here) you get an expandable ‘Parameters’ section where the parameters that have different values from their default are highlighted in orange. If you are using a version of scikit-learn >= 1.8.0 you will also get access to the ‘docstring’ description of each parameter.

The decoder object is an object that can be fit (or trained) on data with labels, and then predict labels on data without.

We first fit it on the data.

decoder.fit(fmri_niimgs, conditions)

Note

After fitting, the HTML representation of the estimator looks different than before fitting.

/home/runner/work/nilearn/nilearn/examples/00_tutorials/plot_decoding_tutorial.py:172: UserWarning:

[NiftiMasker.fit] Generation of a mask has been requested (imgs != None) while a mask was given at masker creation. Given mask will be used.

[Decoder.fit] The decoding model will be trained on 464 features.

[Decoder.fit] The decoding model will be trained on 464 features.

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 10 out of 10 | elapsed: 0.7s finished

We can then predict the labels from the data:

prediction = decoder.predict(fmri_niimgs)

print(f"{prediction=}")

prediction=array(['face', 'face', 'face', 'face', 'face', 'face', 'face', 'face',

'face', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat',

'cat', 'face', 'face', 'face', 'face', 'face', 'face', 'face',

'face', 'face', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat',

'cat', 'cat', 'face', 'face', 'face', 'face', 'face', 'face',

'face', 'face', 'face', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat',

'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat',

'cat', 'cat', 'cat', 'face', 'face', 'face', 'face', 'face',

'face', 'face', 'face', 'face', 'face', 'face', 'face', 'face',

'face', 'face', 'face', 'face', 'face', 'cat', 'cat', 'cat', 'cat',

'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat',

'cat', 'cat', 'cat', 'cat', 'cat', 'face', 'face', 'face', 'face',

'face', 'face', 'face', 'face', 'face', 'face', 'face', 'face',

'face', 'face', 'face', 'face', 'face', 'face', 'cat', 'cat',

'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'face', 'face',

'face', 'face', 'face', 'face', 'face', 'face', 'face', 'cat',

'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'face',

'face', 'face', 'face', 'face', 'face', 'face', 'face', 'face',

'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat',

'face', 'face', 'face', 'face', 'face', 'face', 'face', 'face',

'face', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat',

'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat',

'cat', 'face', 'face', 'face', 'face', 'face', 'face', 'face',

'face', 'face', 'face', 'face', 'face', 'face', 'face', 'face',

'face', 'face', 'face', 'cat', 'cat', 'cat', 'cat', 'cat', 'cat',

'cat', 'cat', 'cat'], dtype='<U4')

Note that for this classification task both classes contain the same number of samples (the problem is balanced), so accuracy is a suitable measure of the decoder's performance. Let’s measure the prediction accuracy:

print((prediction == conditions).sum() / float(len(conditions)))

1.0

This prediction accuracy score is meaningless. Why?
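The score is meaningless because the decoder was evaluated on the very samples it was trained on. The sketch below illustrates the problem with a deliberately simple stand-in model, a 1-nearest-neighbor classifier written in NumPy (not the SVC used in this tutorial), on pure noise where nothing can really be learned. All data and names here are made up for the illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Pure noise: features carry no information about the labels.
X = rng.normal(size=(60, 20))
y = rng.integers(0, 2, size=60)


def predict_1nn(X_train, y_train, X_new):
    """Label each new sample with the label of its closest training sample."""
    # Pairwise squared distances, shape (n_new, n_train).
    d = ((X_new[:, None, :] - X_train[None, :, :]) ** 2).sum(axis=-1)
    return y_train[d.argmin(axis=1)]


# Scoring on the training samples themselves: every sample is its own neighbor.
train_acc = (predict_1nn(X, y, X) == y).mean()

# Scoring on held-out samples gives an honest estimate.
test_acc = (predict_1nn(X[:40], y[:40], X[40:]) == y[40:]).mean()

print(f"same data: {train_acc:.2f}, held-out data: {test_acc:.2f}")
```

The accuracy on the training data is perfect even though the labels are random, while the held-out accuracy has no reason to be far from chance.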

Measuring prediction scores using cross-validation

The proper way to measure error rates or prediction accuracy is via cross-validation: leaving out some data and testing on it.

Manually leaving out data

Let’s leave out the last 30 data points during training, and test the prediction on these last 30 points:

fmri_niimgs_train = index_img(fmri_niimgs, slice(0, -30))

fmri_niimgs_test = index_img(fmri_niimgs, slice(-30, None))

conditions_train = conditions[:-30]

conditions_test = conditions[-30:]

decoder = Decoder(

estimator="svc",

mask=mask_filename,

screening_percentile=100,

verbose=1,

)

decoder.fit(fmri_niimgs_train, conditions_train)

prediction = decoder.predict(fmri_niimgs_test)

# The prediction accuracy is calculated on the test data: this is the accuracy
# of our model on examples it hasn't seen, which tells us how well the model
# performs in general.
prediction_accuracy = (prediction == conditions_test).sum() / float(
    len(conditions_test)
)
print(f"Prediction Accuracy: {prediction_accuracy:.3f}")

/home/runner/work/nilearn/nilearn/examples/00_tutorials/plot_decoding_tutorial.py:213: UserWarning:

[NiftiMasker.fit] Generation of a mask has been requested (imgs != None) while a mask was given at masker creation. Given mask will be used.

[Decoder.fit] The decoding model will be trained on 464 features.

[Decoder.fit] The decoding model will be trained on 464 features.

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 10 out of 10 | elapsed: 0.5s finished

Prediction Accuracy: 0.767

Implementing a KFold loop

We can manually split the data into train and test sets repeatedly, in a KFold strategy, using scikit-learn’s KFold object:

from sklearn.model_selection import KFold

cv = KFold(n_splits=5)

for fold, (train, test) in enumerate(cv.split(conditions), start=1):
    decoder = Decoder(
        estimator="svc",
        mask=mask_filename,
        screening_percentile=100,
        verbose=1,
    )
    decoder.fit(index_img(fmri_niimgs, train), conditions[train])
    prediction = decoder.predict(index_img(fmri_niimgs, test))
    prediction_accuracy = (prediction == conditions[test]).sum() / float(
        len(conditions[test])
    )
    print(
        f"CV Fold {fold:01d} | Prediction Accuracy: {prediction_accuracy:.3f}"
    )

/home/runner/work/nilearn/nilearn/examples/00_tutorials/plot_decoding_tutorial.py:243: UserWarning:

[NiftiMasker.fit] Generation of a mask has been requested (imgs != None) while a mask was given at masker creation. Given mask will be used.

[Decoder.fit] The decoding model will be trained on 464 features.

[Decoder.fit] The decoding model will be trained on 464 features.

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 10 out of 10 | elapsed: 0.3s finished

CV Fold 1 | Prediction Accuracy: 0.886

/home/runner/work/nilearn/nilearn/examples/00_tutorials/plot_decoding_tutorial.py:243: UserWarning:

[NiftiMasker.fit] Generation of a mask has been requested (imgs != None) while a mask was given at masker creation. Given mask will be used.

[Decoder.fit] The decoding model will be trained on 464 features.

[Decoder.fit] The decoding model will be trained on 464 features.

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 10 out of 10 | elapsed: 0.5s finished

CV Fold 2 | Prediction Accuracy: 0.767

/home/runner/work/nilearn/nilearn/examples/00_tutorials/plot_decoding_tutorial.py:243: UserWarning:

[NiftiMasker.fit] Generation of a mask has been requested (imgs != None) while a mask was given at masker creation. Given mask will be used.

[Decoder.fit] The decoding model will be trained on 464 features.

[Decoder.fit] The decoding model will be trained on 464 features.

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 10 out of 10 | elapsed: 0.5s finished

CV Fold 3 | Prediction Accuracy: 0.767

/home/runner/work/nilearn/nilearn/examples/00_tutorials/plot_decoding_tutorial.py:243: UserWarning:

[NiftiMasker.fit] Generation of a mask has been requested (imgs != None) while a mask was given at masker creation. Given mask will be used.

[Decoder.fit] The decoding model will be trained on 464 features.

[Decoder.fit] The decoding model will be trained on 464 features.

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 10 out of 10 | elapsed: 0.4s finished

CV Fold 4 | Prediction Accuracy: 0.698

/home/runner/work/nilearn/nilearn/examples/00_tutorials/plot_decoding_tutorial.py:243: UserWarning:

[NiftiMasker.fit] Generation of a mask has been requested (imgs != None) while a mask was given at masker creation. Given mask will be used.

[Decoder.fit] The decoding model will be trained on 464 features.

[Decoder.fit] The decoding model will be trained on 464 features.

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 10 out of 10 | elapsed: 0.4s finished

CV Fold 5 | Prediction Accuracy: 0.744
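To see what the five folds look like and to summarize their scores, here is a short NumPy sketch. It assumes that an unshuffled 5-fold split cuts the 216 samples into contiguous blocks, and it uses np.array_split as a stand-in that yields the same block sizes; the fold accuracies are copied from the output above.

```python
import numpy as np

n_samples = 216  # number of face/cat volumes kept above

# Without shuffling, 5 folds are contiguous blocks; array_split gives the
# same block sizes (the first folds absorb the remainder).
test_blocks = np.array_split(np.arange(n_samples), 5)
fold_sizes = [len(block) for block in test_blocks]
print(fold_sizes)  # [44, 43, 43, 43, 43]

# Fold accuracies copied from the output above.
fold_scores = np.array([0.886, 0.767, 0.767, 0.698, 0.744])
print(f"mean accuracy: {fold_scores.mean():.3f}")
```

Reporting the mean over folds, together with the spread between folds, gives a more stable picture than any single split.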

Cross-validation with the decoder

The decoder also implements a cross-validation loop by default and returns an array of shape (cross-validation parameters, n_folds). We can use accuracy score to measure its performance by defining accuracy as the scoring parameter.

n_folds = 5

decoder = Decoder(

estimator="svc",

mask=mask_filename,

cv=n_folds,

scoring="accuracy",

screening_percentile=100,

verbose=1,

)

decoder.fit(fmri_niimgs, conditions)

/home/runner/work/nilearn/nilearn/examples/00_tutorials/plot_decoding_tutorial.py:269: UserWarning:

[NiftiMasker.fit] Generation of a mask has been requested (imgs != None) while a mask was given at masker creation. Given mask will be used.

[Decoder.fit] The decoding model will be trained on 464 features.

[Decoder.fit] The decoding model will be trained on 464 features.

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 5 out of 5 | elapsed: 0.3s finished

A cross-validation pipeline can also be implemented manually. More details can be found on the scikit-learn website.

Then we can check the best-performing parameters for each fold.

print(decoder.cv_params_["face"])

{'C': [100.0, 100.0, 100.0, 100.0, 100.0]}

Note

We can speed things up by using all the CPUs of our computer with the n_jobs parameter.

The best way to do cross-validation is to respect the structure of the experiment, for instance by leaving out full runs of acquisition.

The number of the run is stored in the CSV file giving the behavioral data. We have to apply our condition mask to it, to select only cats and faces.

run_label = behavioral["chunks"][condition_mask]

The fMRI data is acquired by runs, and the noise is autocorrelated in a given run. Hence, it is better to predict across runs when doing cross-validation. To leave a run out, pass the cross-validator object to the cv parameter of decoder.

from sklearn.model_selection import LeaveOneGroupOut

cv = LeaveOneGroupOut()

decoder = Decoder(

estimator="svc",

mask=mask_filename,

cv=cv,

screening_percentile=100,

verbose=1,

)

decoder.fit(fmri_niimgs, conditions, groups=run_label)

print(f"{decoder.cv_scores_=}")

/home/runner/work/nilearn/nilearn/examples/00_tutorials/plot_decoding_tutorial.py:310: UserWarning:

[NiftiMasker.fit] Generation of a mask has been requested (imgs != None) while a mask was given at masker creation. Given mask will be used.

[Decoder.fit] The decoding model will be trained on 464 features.

[Decoder.fit] The decoding model will be trained on 464 features.

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 12 out of 12 | elapsed: 0.7s finished

decoder.cv_scores_={'cat': [1.0, 1.0, 1.0, 1.0, 0.9629629629629629, 0.8518518518518519, 0.9753086419753086, 0.40740740740740744, 0.9876543209876543, 1.0, 0.9259259259259259, 0.8765432098765432], 'face': [1.0, 1.0, 1.0, 1.0, 0.9629629629629629, 0.8518518518518519, 0.9753086419753086, 0.40740740740740744, 0.9876543209876543, 1.0, 0.9259259259259259, 0.8765432098765432]}
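The splitting rule behind leaving one run out can be sketched in a few lines of NumPy. This mimics the logic of a leave-one-group-out split rather than calling scikit-learn, and it assumes, for illustration, that each of the 12 runs contributes the same number of kept volumes (18, so 216 in total).

```python
import numpy as np

# Hypothetical layout: 12 runs with 18 kept volumes each (216 in total).
run_label = np.repeat(np.arange(12), 18)

splits = []
for run in np.unique(run_label):
    test_idx = np.flatnonzero(run_label == run)   # one full run held out
    train_idx = np.flatnonzero(run_label != run)  # all other runs
    splits.append((train_idx, test_idx))

n_train, n_test = len(splits[0][0]), len(splits[0][1])
print(len(splits), "folds;", n_train, "train /", n_test, "test")
```

Because train and test samples never come from the same run, noise shared within a run cannot leak from the training set into the test set.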

Inspecting the model weights

Finally, it may be useful to inspect and display the model weights.

Turning the weights into a nifti image

We retrieve the SVC discriminating weights:

coef_ = decoder.coef_

print(f"{coef_=}")

coef_=array([[-3.89376205e-02, -1.87166866e-02, -3.23027681e-02,

-2.88746048e-02, 4.18696540e-02, 1.10744341e-02,

1.69997242e-02, -5.50952799e-02, -1.94204432e-02,

-3.51226470e-02, 1.08510949e-02, -1.28797568e-02,

-1.54678061e-02, -3.78907201e-02, -3.69168130e-02,

2.28088294e-02, 6.56420597e-03, -7.65753467e-03,

1.67105880e-02, -8.02151411e-03, 5.29515905e-02,

-8.17593997e-02, -6.36993757e-02, 2.41326414e-02,

4.59876870e-02, -2.22604122e-02, -1.77308734e-02,

2.22196424e-02, -9.53207928e-03, 5.76044865e-02,

2.14299589e-02, -9.14225975e-02, 4.03656530e-03,

-2.89275692e-02, -3.89030821e-02, -3.35114208e-02,

2.21403243e-03, 8.73129818e-03, -3.37416239e-02,

-2.41276816e-02, -6.81648242e-02, 1.65405389e-02,

2.70783909e-02, -6.56850697e-03, -1.21662871e-02,

5.47673985e-02, 8.13275549e-03, 3.60955492e-02,

-1.52762492e-02, 7.02911754e-02, 1.28099956e-03,

2.08007750e-02, -4.09935566e-03, 3.72429276e-02,

-3.77395037e-02, -1.03857300e-02, -2.38237757e-02,

-5.48879541e-02, 4.43027676e-02, -1.47419203e-01,

-2.34043401e-02, 1.87114244e-02, 6.65860055e-02,

-9.07602344e-02, -1.22035568e-02, -2.95651477e-03,

3.22093847e-02, -3.04054758e-02, 6.15345005e-02,

1.12249002e-02, 1.93776981e-02, -1.30542239e-02,

4.42975960e-02, -2.23065824e-02, 6.88147244e-02,

1.69389904e-02, 1.78947767e-02, 1.00276531e-02,

2.99186098e-02, -2.52172087e-02, 1.06155225e-02,

-6.31950882e-03, 2.21507766e-03, -2.23348938e-02,

1.42561165e-02, -1.53123895e-02, -1.98227183e-02,

-4.32638581e-02, -4.55125110e-02, 3.41589555e-02,

-2.79200154e-02, -2.80910237e-02, -3.70157627e-02,

-5.71450275e-02, -6.98950242e-02, 3.20163961e-03,

-8.35456480e-03, -3.37628681e-02, 3.04261590e-02,

8.68458874e-03, 6.19375462e-03, 5.94176083e-02,

9.07297616e-03, -1.48931287e-02, 1.43559863e-02,

-1.09027188e-02, 2.67698064e-02, 4.73786696e-02,

-2.96431337e-02, 3.09424787e-02, 1.57929360e-02,

-3.16721531e-02, -4.00105660e-02, -5.40261506e-02,

2.82613083e-02, -1.12101269e-02, -5.45402097e-02,

6.32177145e-02, -1.49997198e-02, 2.47542327e-03,

-4.56643857e-02, -1.83881575e-02, 1.19958071e-02,

-3.72171682e-02, -2.25509452e-03, 4.58656402e-02,

4.79165486e-02, 2.51817238e-03, -4.31721986e-02,

-5.35324130e-03, 5.76995178e-02, 7.40829723e-03,

-3.20590313e-02, 4.35708300e-03, 1.68303391e-02,

-2.92570229e-02, -2.24492569e-03, -8.30218383e-03,

-1.00011702e-02, 2.17137328e-02, -1.92619629e-03,

-1.33222214e-02, -2.80301258e-02, -1.75292690e-02,

-9.17801922e-03, -7.09943328e-03, -1.43031869e-02,

5.06832237e-02, -1.84812086e-02, -4.71507276e-02,

1.72570178e-02, -4.76642220e-02, -9.08879036e-04,

4.00770329e-02, 7.53992882e-02, 7.25620571e-03,

4.82606536e-02, 4.50556181e-02, 3.61201734e-02,

-8.16492629e-03, 1.95407025e-02, 3.57882724e-02,

4.89305122e-02, 3.82972464e-02, 6.23918137e-02,

6.13674795e-02, -1.68750576e-02, 1.66515188e-02,

3.35522261e-02, -1.80212340e-02, 4.46411292e-02,

-3.53244268e-02, -3.67293434e-02, -4.62244692e-03,

4.86829612e-02, 3.39667236e-02, 6.21707569e-03,

1.73613381e-02, 2.01698392e-02, 2.17098070e-02,

2.91413848e-02, 2.37776802e-02, 4.84699039e-02,

-9.22617691e-03, -2.82637577e-02, -2.13781456e-02,

1.80796177e-03, 4.79688071e-02, -9.78894826e-03,

1.11431063e-02, -1.65019137e-02, -2.89089807e-02,

2.42850246e-02, -1.22346081e-02, -2.92869565e-02,

-2.89846958e-02, -3.39531197e-02, -3.65290649e-03,

2.65324390e-02, 4.58043204e-02, -5.93381988e-02,

-2.13630348e-02, -3.09405327e-02, 5.50179240e-02,

-3.38816109e-02, 6.12616440e-03, 1.41484174e-02,

1.10215804e-02, 5.33811907e-02, -2.12339146e-02,

6.37421802e-03, -1.13075941e-02, -2.64225989e-02,

-2.22400628e-02, -5.31919939e-02, -3.98651925e-02,

-1.29727768e-01, -3.28093249e-02, -2.89711896e-02,

-9.13468828e-03, -7.28738250e-03, -3.71054012e-02,

-6.34907298e-02, 2.04377795e-03, -8.26794898e-02,

-6.71214743e-02, -2.29119603e-03, -2.33451988e-02,

1.77914720e-02, -8.74666201e-02, -2.76487421e-03,

-4.38275763e-02, -1.28052208e-02, 2.78033938e-02,

-4.32695003e-02, -3.22688604e-02, -2.28030092e-02,

-2.57413470e-02, 2.03623602e-02, -9.90248901e-03,

-3.15033089e-02, -1.81420070e-02, -1.12327799e-03,

-4.17432079e-02, -6.23480657e-02, 2.54536638e-04,

-6.73680356e-02, 6.53966919e-02, 1.06521502e-02,

2.21983160e-02, -1.98727615e-02, -1.85518110e-02,

4.05703339e-02, -3.02838021e-02, -8.10051673e-02,

-7.42458099e-02, -4.93849682e-02, -1.01771495e-02,

1.09406597e-02, -4.49254011e-02, 2.92747369e-02,

7.05292407e-03, 5.07539151e-03, -4.84047807e-03,

2.48754248e-03, 3.00654339e-02, -2.63075353e-03,

4.64686490e-03, 7.90210100e-02, 1.04858959e-02,

1.68080626e-02, -4.36719875e-02, -1.08856253e-02,

2.10241054e-02, -4.41965186e-02, 3.16496394e-03,

6.98669385e-02, 8.61631233e-02, 4.96234043e-02,

6.03913949e-03, 5.56493802e-02, -2.98920239e-02,

4.13027557e-03, -3.21952736e-02, -3.14991708e-02,

-5.31276763e-02, 2.67257184e-02, 3.14428083e-02,

6.67112141e-03, -1.28703256e-02, 2.20151231e-02,

5.68525047e-02, 2.25603831e-02, -2.04616492e-02,

5.10339213e-03, 2.85356406e-02, -1.81663842e-02,

-8.48418699e-03, -3.18825105e-02, -1.18497662e-02,

-4.10846137e-02, 3.11776385e-02, 9.63470438e-03,

-8.25919523e-03, -3.12228561e-02, 8.57656985e-03,

-9.70205130e-03, 1.32375062e-02, 4.06447863e-02,

8.23421167e-03, -3.27356138e-02, -4.33875051e-03,

-1.75530557e-02, 6.88852651e-03, 3.45131317e-02,

7.03299521e-02, 2.16785512e-02, 5.32230236e-03,

8.17564850e-02, 6.40062454e-02, -2.31141150e-03,

-1.17558267e-02, 1.75889189e-01, 3.18128310e-02,

-3.15886978e-02, 3.34028540e-02, 2.22780436e-02,

1.00232195e-02, -4.74911765e-02, -2.12756869e-02,

-3.98717950e-02, -6.04069522e-02, -4.65060488e-02,

1.03003928e-02, -3.05681245e-04, 1.80743734e-02,

-1.75451390e-02, -8.72590148e-02, 1.00662626e-01,

4.46119755e-03, 7.46870515e-02, -6.13410511e-02,

2.81703420e-02, -1.40979350e-02, 3.14638182e-02,

-1.63834909e-02, 3.66532337e-02, -5.15685795e-03,

1.45093792e-02, 6.35867705e-02, 2.34597958e-02,

8.81062621e-02, 6.15344247e-02, -1.39361115e-02,

2.07247368e-02, -3.15463695e-03, 5.15425318e-02,

-2.88767519e-02, 1.60263983e-02, 2.09701896e-02,

-3.29172576e-02, -2.59460439e-02, -5.60399838e-02,

-3.64627796e-02, 1.12882972e-02, 2.17267673e-02,

-1.51637851e-02, -7.82891131e-03, 2.42548949e-02,

9.47012886e-02, -2.63033295e-02, 1.17313282e-04,

-5.24170836e-03, 4.17988207e-02, 8.85680988e-02,

6.23645776e-03, 1.86600486e-02, 1.54629291e-02,

3.50541277e-03, 6.20612707e-03, -1.19790103e-02,

1.59526817e-02, 7.12123584e-03, -8.93193451e-02,

-3.54348202e-03, 1.23477728e-02, 3.03927582e-02,

-2.37295503e-02, -3.82792179e-02, -4.98745752e-02,

4.66896243e-02, -1.23292370e-02, -1.10333086e-02,

2.18105488e-02, 2.18719891e-02, 2.63538953e-02,

1.05280744e-02, 1.84617512e-02, 8.36183441e-04,

-6.65217245e-03, 3.49396007e-02, 1.49354106e-02,

-1.11597484e-02, 6.69095614e-03, -2.00059189e-02,

-3.99014380e-02, 3.01872773e-02, -1.09866792e-02,

-4.11780664e-02, 2.72052189e-02, 1.16425192e-02,

-1.55502815e-02, 3.27701592e-02, 3.95493964e-02,

8.48714620e-03, 2.19934968e-02, -9.88670457e-03,

-3.61420421e-02, -4.77020951e-02, 1.90074089e-02,

-5.58287182e-02, -3.31738290e-02, -2.24913678e-02,

-3.36175651e-02, -4.07354851e-02, 1.08860472e-02,

1.12810528e-02, 7.63144418e-02, 4.04802771e-03,

3.07014708e-02, 2.89177514e-02, 4.71618208e-03,

5.13383443e-02, -4.10364195e-02, 1.23260032e-03,

-2.50403651e-02, 5.85905083e-02, -1.04965660e-01,

-4.41705602e-02, 1.18520515e-02, -5.83203655e-02,

-4.82244297e-02, 9.17661328e-03, 1.03260034e-02,

-5.09177853e-03, -3.23390432e-02, -3.19387346e-02,

-1.53770828e-02, -5.21212044e-02, 1.55620006e-02,

2.93484139e-02, -1.92527726e-02, 1.76694119e-02,

2.67991691e-02, 5.76553825e-02, -1.38163896e-02,

2.60399892e-02, 1.50399988e-02, 1.27424374e-02,

-2.29243937e-02, -1.06664389e-02, 9.81943393e-03,

-4.77512298e-02, 1.64242767e-02]])

It’s a numpy array with only one coefficient per voxel:

print(f"{coef_.shape=}")

coef_.shape=(1, 464)
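One weight per voxel is what a linear classifier needs: each sample gets a score equal to the weighted sum of its voxel values plus an intercept, and the sign of the score decides the class. The sketch below shows this with random stand-in values. The weights, the intercept, and the mapping of positive scores to "face" are assumptions made for the illustration, not values read from the fitted decoder.

```python
import numpy as np

rng = np.random.default_rng(0)
n_voxels = 464  # matches coef_.shape[1] above

# Hypothetical weights, intercept and samples (random, for illustration only).
w = rng.normal(scale=0.05, size=(1, n_voxels))
b = 0.1
X = rng.normal(size=(5, n_voxels))

# One score per sample: weighted sum of voxel values plus the intercept.
scores = X @ w.T + b  # shape (5, 1)

# The sign of the score picks one of the two classes
# (assumed here: positive means "face", negative means "cat").
classes = np.array(["cat", "face"])
pred = classes[(scores.ravel() > 0).astype(int)]
print(scores.shape, pred)
```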

To get the Nifti image of these coefficients, we only need to retrieve coef_img_ from the decoder and select the class:

coef_img = decoder.coef_img_["face"]

coef_img is now a Nifti1Image. We can save the coefficients as a nii.gz file:

from pathlib import Path

output_dir = Path.cwd() / "results" / "plot_decoding_tutorial"

output_dir.mkdir(exist_ok=True, parents=True)

print(f"Output will be saved to: {output_dir}")

decoder.coef_img_["face"].to_filename(output_dir / "haxby_svc_weights.nii.gz")

Output will be saved to: /home/runner/work/nilearn/nilearn/examples/00_tutorials/results/plot_decoding_tutorial
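Going from a flat vector of weights back to a brain-shaped volume amounts to writing each weight at the position of its voxel in the mask. Here is a minimal NumPy sketch of that "unmasking" idea with a made-up mask and weights; the decoder's coefficient image additionally carries the affine and header of a Nifti image, which this sketch ignores.

```python
import numpy as np

# Hypothetical 3D boolean mask with 6 in-mask voxels.
mask_3d = np.zeros((4, 5, 3), dtype=bool)
mask_3d[1:3, 1:4, 1] = True

# One weight per in-mask voxel, like a row of coef_.
weights = np.linspace(-1.0, 1.0, int(mask_3d.sum()))

# Write the weights back at the mask locations; everything else stays 0.
weight_volume = np.zeros(mask_3d.shape)
weight_volume[mask_3d] = weights

print(weight_volume.shape, np.count_nonzero(weight_volume))
```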

Plotting the SVM weights

We can plot the weights, using the subject’s anatomical image as a background:

from nilearn.plotting import view_img

view_img(

decoder.coef_img_["face"],

bg_img=haxby_dataset.anat[0],

title="SVM weights",

dim=-1,

)

/home/runner/work/nilearn/nilearn/.tox/doc/lib/python3.10/site-packages/numpy/core/fromnumeric.py:771: UserWarning:

Warning: 'partition' will ignore the 'mask' of the MaskedArray.

/home/runner/work/nilearn/nilearn/examples/00_tutorials/plot_decoding_tutorial.py:353: UserWarning:

Casting data from int16 to float32