Note

This page is reference documentation. It only explains the function signature, not how to use it. Please refer to the user guide for the big picture.

nilearn.datasets.fetch_localizer_contrasts

- nilearn.datasets.fetch_localizer_contrasts(contrasts, n_subjects=None, get_tmaps=False, get_masks=False, get_anats=False, data_dir=None, resume=True, verbose=1)

Download and load Brainomics/Localizer dataset (94 subjects).

“The Functional Localizer is a simple and fast acquisition procedure based on a 5-minute functional magnetic resonance imaging (fMRI) sequence that can be run as easily and as systematically as an anatomical scan. This protocol captures the cerebral bases of auditory and visual perception, motor actions, reading, language comprehension and mental calculation at an individual level. Individual functional maps are reliable and quite precise. The procedure is described in more detail on the Functional Localizer page.” (see https://osf.io/vhtf6/)

You may cite Papadopoulos Orfanos et al.[1] when using this dataset.

Scientific results obtained using this dataset are described in Pinel et al.[2].
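A minimal sketch of a typical call, assuming nilearn is installed and network access is available for the first download. The wrapper `fetch_button_press_maps` and the helper `count_files` are hypothetical names; the contrast name and the `n_subjects`, `get_tmaps`, `data_dir` and `verbose` arguments come from the parameter list on this page. Because the attribute names of the returned Bunch are not listed here, `count_files` relies only on the result being dictionary-like.

```python
from collections.abc import Mapping


def fetch_button_press_maps(n_subjects=5, data_dir=None):
    """Fetch 'left vs right button press' maps for a few subjects.

    Hypothetical wrapper. Requires nilearn, and network access the first
    time it runs (cached files are not re-downloaded).
    """
    # Imported lazily so the rest of this snippet runs without nilearn.
    from nilearn.datasets import fetch_localizer_contrasts

    return fetch_localizer_contrasts(
        ["left vs right button press"],
        n_subjects=n_subjects,
        get_tmaps=True,      # also fetch t maps
        data_dir=data_dir,   # None means the default nilearn_data folder
        verbose=0,           # 0 means no messages
    )


def count_files(data: Mapping) -> dict:
    """Count entries in each list-valued field of a dictionary-like result."""
    return {
        key: len(value)
        for key, value in data.items()
        if isinstance(value, (list, tuple))
    }
```

Calling `count_files(fetch_button_press_maps(n_subjects=5))` would then report how many entries each list-valued field holds; non-list fields are skipped.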

- Parameters:

- contrasts

list of str. The contrasts to be fetched (for all 94 subjects available). Allowed values are:

- "checkerboard"
- "horizontal checkerboard"
- "vertical checkerboard"
- "horizontal vs vertical checkerboard"
- "vertical vs horizontal checkerboard"
- "sentence listening"
- "sentence reading"
- "sentence listening and reading"
- "sentence reading vs checkerboard"
- "calculation (auditory cue)"
- "calculation (visual cue)"
- "calculation (auditory and visual cue)"
- "calculation (auditory cue) vs sentence listening"
- "calculation (visual cue) vs sentence reading"
- "calculation vs sentences"
- "calculation (auditory cue) and sentence listening"
- "calculation (visual cue) and sentence reading"
- "calculation and sentence listening/reading"
- "calculation (auditory cue) and sentence listening vs "
- "calculation (visual cue) and sentence reading"
- "calculation (visual cue) and sentence reading vs checkerboard"
- "calculation and sentence listening/reading vs button press"
- "left button press (auditory cue)"
- "left button press (visual cue)"
- "left button press"
- "left vs right button press"
- "right button press (auditory cue)"
- "right button press (visual cue)"
- "right button press"
- "right vs left button press"
- "button press (auditory cue) vs sentence listening"
- "button press (visual cue) vs sentence reading"
- "button press vs calculation and sentence listening/reading"

or, equivalently, one can use the original names:

- "checkerboard"
- "horizontal checkerboard"
- "vertical checkerboard"
- "horizontal vs vertical checkerboard"
- "vertical vs horizontal checkerboard"
- "auditory sentences"
- "visual sentences"
- "auditory&visual sentences"
- "visual sentences vs checkerboard"
- "auditory calculation"
- "visual calculation"
- "auditory&visual calculation"
- "auditory calculation vs auditory sentences"
- "visual calculation vs sentences"
- "auditory&visual calculation vs sentences"
- "auditory processing"
- "visual processing"
- "visual processing vs auditory processing"
- "auditory processing vs visual processing"
- "visual processing vs checkerboard"
- "cognitive processing vs motor"
- "left auditory click"
- "left visual click"
- "left auditory&visual click"
- "left auditory & visual click vs right auditory&visual click"
- "right auditory click"
- "right visual click"
- "right auditory&visual click"
- "right auditory & visual click vs left auditory&visual click"
- "auditory click vs auditory sentences"
- "visual click vs visual sentences"
- "auditory&visual motor vs cognitive processing"

- n_subjects

int or list or None, default=None. The number or list of subjects to load. If None is given, all 94 subjects are used.

- get_tmaps

bool, default=False. Whether t maps should be fetched or not.

- get_masks

bool, default=False. Whether individual masks should be fetched or not.

- get_anats

bool, default=False. Whether individual structural images should be fetched or not.

- data_dir

pathlib.Path or str or None, optional. Path where data should be downloaded. By default, files are downloaded in a nilearn_data folder in the home directory of the user. See also nilearn.datasets.utils.get_data_dirs.

- resume

bool, default=True. Whether to resume download of a partly-downloaded file.

- verbose

bool or int, default=1. Verbosity level (0 or False means no message).
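Since the two naming schemes for `contrasts` are interchangeable, a request can list the same contrast twice under different names. A minimal sketch of a pre-flight helper that normalizes names and checks `n_subjects` before calling the fetcher. `prepare_fetch_args` and `ORIGINAL_TO_DESCRIPTIVE` are hypothetical, not part of nilearn; the mapping holds only a few pairs inferred from the two lists above, and the range check is a user-side check based on the 94 available subjects (nilearn's own handling of out-of-range values is not described on this page).

```python
# Assumed correspondence between a few original names and their descriptive
# equivalents, inferred from the two lists of allowed values (not exhaustive).
ORIGINAL_TO_DESCRIPTIVE = {
    "auditory sentences": "sentence listening",
    "visual sentences": "sentence reading",
    "auditory calculation": "calculation (auditory cue)",
    "visual calculation": "calculation (visual cue)",
    "left auditory click": "left button press (auditory cue)",
    "left visual click": "left button press (visual cue)",
    "right auditory click": "right button press (auditory cue)",
    "right visual click": "right button press (visual cue)",
}

N_AVAILABLE = 94  # number of subjects stated on this page


def prepare_fetch_args(contrasts, n_subjects=None):
    """Return keyword arguments for fetch_localizer_contrasts (hypothetical)."""
    if isinstance(contrasts, str):
        raise TypeError("contrasts must be a list of str, not a single str")
    # Map known original names to descriptive ones, dropping duplicates
    # while preserving the requested order.
    normalized = []
    for name in contrasts:
        descriptive = ORIGINAL_TO_DESCRIPTIVE.get(name, name)
        if descriptive not in normalized:
            normalized.append(descriptive)
    # Only integer counts are range-checked; lists are passed through as-is.
    if isinstance(n_subjects, int) and not 1 <= n_subjects <= N_AVAILABLE:
        raise ValueError(f"n_subjects must be between 1 and {N_AVAILABLE}")
    return {"contrasts": normalized, "n_subjects": n_subjects}
```

The returned dictionary can be unpacked into the fetcher together with the other parameters, for example `fetch_localizer_contrasts(**prepare_fetch_args(["auditory sentences"], n_subjects=5), get_tmaps=True)`.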


- Returns:

- data : Bunch

Dictionary-like object. The attributes of interest are:

See also

Notes

If the dataset files are already present in the user’s Nilearn data directory, this fetcher will not re-download them. To force a fresh download, you can remove the existing dataset folder from your local Nilearn data directory.

See the Nilearn documentation for more details on how Nilearn stores datasets.
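The Notes suggest deleting the cached dataset folder to force a fresh download. A minimal sketch, assuming the default location described under `data_dir` (a nilearn_data folder in the home directory) and assuming the Localizer files sit in a sub-folder named `brainomics_localizer`. Both the helper `remove_localizer_cache` and that folder name are assumptions, so inspect your data directory (for example with nilearn.datasets.utils.get_data_dirs) before deleting anything.

```python
import shutil
from pathlib import Path


def remove_localizer_cache(data_dir=None, folder_name="brainomics_localizer"):
    """Delete a cached Localizer folder so the next fetch re-downloads it.

    Hypothetical helper: ``folder_name`` is an assumed sub-folder name.
    Returns True if a folder was removed, False if nothing was cached.
    """
    # Default location described on this page: ~/nilearn_data
    root = Path(data_dir) if data_dir is not None else Path.home() / "nilearn_data"
    target = root / folder_name
    # Refuse names that would point outside the data directory.
    if target.resolve().parent != root.resolve():
        raise ValueError("folder_name must be a direct sub-folder of data_dir")
    if not target.is_dir():
        return False  # nothing cached, nothing removed
    shutil.rmtree(target)
    return True
```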

References

Examples using nilearn.datasets.fetch_localizer_contrasts

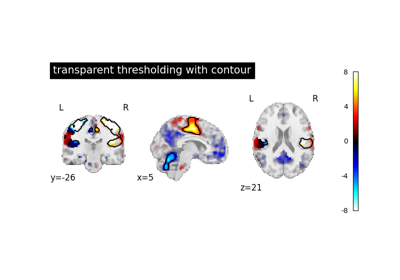

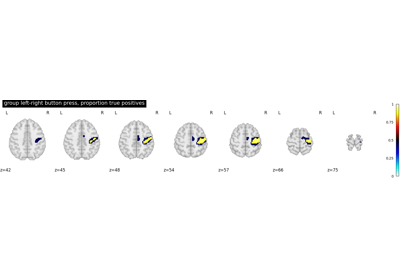

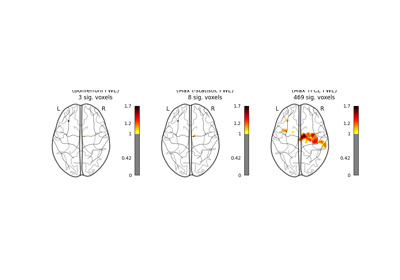

Second-level fMRI model: true positive proportion in clusters

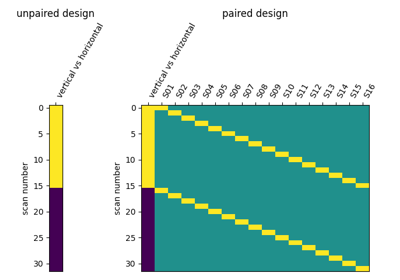

Second-level fMRI model: two-sample test, unpaired and paired

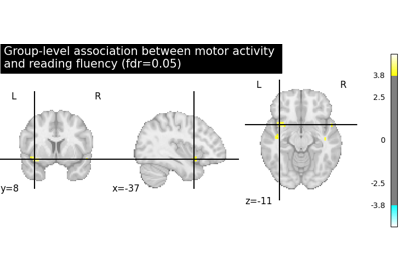

Massively univariate analysis of a motor task from the Localizer dataset