Note

This page is reference documentation. It only explains the function signature, not how to use it. Please refer to the user guide for the big picture.

nilearn.image.high_variance_confounds

- nilearn.image.high_variance_confounds(imgs, n_confounds=5, percentile=2.0, detrend=True, mask_img=None)

Return confounds extracted from input signals with highest variance.

- Parameters:

- imgs : 4D Niimg-like or 2D SurfaceImage object

Input signals from which the confounds are extracted.

- mask_img : Niimg-like or SurfaceImage object, or None, default=None

If not provided, all voxels / vertices are used. If provided, confounds are extracted from voxels / vertices inside the mask. See Input and output: neuroimaging data representation.

- n_confounds : int, default=5

Number of confounds to return.

- percentile : float, default=2.0

Percentile of highest-variance signals to keep before computing the singular value decomposition, with 0 <= percentile <= 100. The number of signals kept, mask_img.sum() * percentile / 100, must be greater than n_confounds.

- detrend : bool, default=True

If True, detrend signals before processing.
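The constraint on percentile is plain arithmetic on the number of signals considered, that is, the number of voxels / vertices in the mask. The sketch below evaluates it for a few mask sizes using check_percentile, a hypothetical helper written for illustration only; it is not part of nilearn.

```python
def check_percentile(n_signals, n_confounds=5, percentile=2.0):
    """Hypothetical helper (not a nilearn function): check the documented
    constraint n_signals * percentile / 100 > n_confounds."""
    if not 0.0 <= percentile <= 100.0:
        raise ValueError("percentile must satisfy 0 <= percentile <= 100")
    n_kept = n_signals * percentile / 100.0  # signals retained before the SVD
    return n_kept > n_confounds


# 10,000 voxels at the default 2% keep 200 signals, enough for 5 confounds.
print(check_percentile(10_000))  # True
# 250 voxels keep 5.0 signals, which is not strictly greater than 5.
print(check_percentile(250))  # False
# Raising the percentile to 10% keeps 25 signals.
print(check_percentile(250, percentile=10.0))  # True
```

Note that the inequality is strict: keeping exactly n_confounds signals is not enough.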

- Returns:

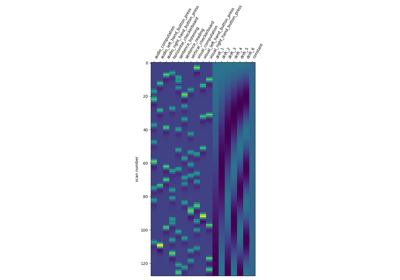

numpy.ndarray

Highest variance confounds. Shape: (number_of_scans, n_confounds).
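The returned array has one row per scan and one column per confound, which is the layout expected by regression-based cleaning (nilearn.signal.clean, for instance, accepts such an array through its confounds argument). As an illustration only, the numpy sketch below regresses a confounds array out of a set of signals with ordinary least squares; regress_out is a hypothetical helper, and random data stands in for real confounds.

```python
import numpy as np


def regress_out(signals, confounds):
    """Hypothetical helper: remove the least-squares fit of `confounds`
    (shape (n_scans, n_confounds)) from `signals` (shape (n_scans, n_signals))."""
    # Add an intercept column so that constant offsets are removed as well.
    design = np.column_stack([np.ones(confounds.shape[0]), confounds])
    beta, *_ = np.linalg.lstsq(design, signals, rcond=None)
    return signals - design @ beta


rng = np.random.default_rng(0)
confounds = rng.standard_normal((50, 3))  # stand-in for a (n_scans, n_confounds) array
signals = rng.standard_normal((50, 4))
cleaned = regress_out(signals, confounds)
# The residuals are orthogonal to every confound column.
print(np.allclose(confounds.T @ cleaned, 0.0))  # True
```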

Notes

This method is related to the approach published in the literature as ‘CompCor’ (Behzadi et al., NeuroImage, 2007).

The implemented algorithm does the following, after detrending the signals if detrend=True:

- Computes the sum of squares of each signal (no mean removal).

- Keeps the given percentile (percentile) of signals with the highest variance.

- Computes an SVD of the retained signals.

- Returns the n_confounds signals from the SVD with the highest singular values.
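The steps above can be sketched in numpy on a 2D array of shape (n_scans, n_signals), with one column per voxel / vertex, assumed to be already masked. This is an illustrative re-implementation, not nilearn's code: the function name high_variance_confounds_sketch is hypothetical, and the linear least-squares detrending is an assumption that may differ in detail from nilearn's own detrending.

```python
import numpy as np


def high_variance_confounds_sketch(series, n_confounds=5, percentile=2.0, detrend=True):
    """Hypothetical sketch of the listed steps.

    series: array of shape (n_scans, n_signals), one column per voxel / vertex.
    Returns an array of shape (n_scans, n_confounds).
    """
    series = np.asarray(series, dtype=float)
    if detrend:
        # Assumption: remove a least-squares linear trend (including the mean)
        # from each column.
        t = np.arange(series.shape[0], dtype=float)
        design = np.column_stack([np.ones_like(t), t])
        coef, *_ = np.linalg.lstsq(design, series, rcond=None)
        series = series - design @ coef

    # Step 1: sum of squares of each signal, with no mean removal.
    sum_sq = np.sum(series ** 2, axis=0)

    # Step 2: keep the `percentile` % of signals with the highest values.
    threshold = np.percentile(sum_sq, 100.0 - percentile)
    kept = series[:, sum_sq > threshold]
    if kept.shape[1] <= n_confounds:
        raise ValueError("too few signals kept; increase percentile")

    # Step 3: thin SVD of the retained signals (n_scans x n_kept).
    u, s, _ = np.linalg.svd(kept, full_matrices=False)

    # Step 4: numpy returns singular values in descending order, so the first
    # n_confounds columns of u are the time courses with the highest ones.
    return u[:, :n_confounds]


rng = np.random.default_rng(0)
data = rng.standard_normal((100, 1000))  # 100 scans, 1000 signals
confounds = high_variance_confounds_sketch(data, n_confounds=5, percentile=2.0)
print(confounds.shape)  # (100, 5)
```

Here 2% of 1000 signals leaves 20 columns for the SVD, comfortably more than the 5 confounds requested; the returned columns are orthonormal time courses.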