Note

Go to the end to download the full example code. or to run this example in your browser via Binder

Advanced decoding using scikit learn¶

This tutorial opens the box of decoding pipelines to bridge integrated

functionalities provided by the Decoder object

with more advanced usecases. It reproduces basic examples functionalities with

direct calls to scikit-learn function and gives pointers to more advanced

objects. If some concepts seem unclear,

please refer to the documentation on decoding

and in particular to the advanced section.

As in many other examples, we perform decoding of the visual category of a

stimuli on Haxby et al.[1] dataset,

focusing on distinguishing two categories:

face and cat images.

Retrieve and load the fMRI data from the Haxby study¶

First download the data¶

# The :func:`~nilearn.datasets.fetch_haxby` function will download the

# Haxby dataset composed of fMRI images in a Niimg,

# a spatial mask and a text document with label of each image

from nilearn import datasets

haxby_dataset = datasets.fetch_haxby()

mask_filename = haxby_dataset.mask_vt[0]

fmri_filename = haxby_dataset.func[0]

# Loading the behavioral labels

import pandas as pd

behavioral = pd.read_csv(haxby_dataset.session_target[0], delimiter=" ")

behavioral

[fetch_haxby] Dataset found in /home/runner/nilearn_data/haxby2001

We keep only a images from a pair of conditions(cats versus faces).

from nilearn.image import index_img

conditions = behavioral["labels"]

condition_mask = conditions.isin(["face", "cat"])

fmri_niimgs = index_img(fmri_filename, condition_mask)

conditions = conditions[condition_mask]

conditions = conditions.to_numpy()

run_label = behavioral["chunks"][condition_mask]

Performing decoding with scikit-learn¶

# Importing a classifier

# ......................

# We can import many predictive models from scikit-learn that can be used in a

# decoding pipelines. They are all used with the same `fit()` and `predict()`

# functions.

# Let's define a Support Vector Classifier

# (or :sklearn:`SVC <modules/svm.html>`).

from sklearn.svm import SVC

svc = SVC()

Masking the data¶

To use a scikit-learn estimator on brain images, you should first mask the

data using a NiftiMasker to extract only the

voxels inside the mask of interest,

and transform 4D input fMRI data to 2D arrays

(shape=(n_timepoints, n_voxels)) that estimators can work on.

from nilearn.maskers import NiftiMasker

masker = NiftiMasker(

mask_img=mask_filename,

runs=run_label,

smoothing_fwhm=4,

memory="nilearn_cache",

memory_level=1,

verbose=1,

)

fmri_masked = masker.fit_transform(fmri_niimgs)

\[NiftiMasker.wrapped] Loading mask from

'/home/runner/nilearn_data/haxby2001/subj2/mask4_vt.nii.gz'

\[NiftiMasker.wrapped] Loading data from <nibabel.nifti1.Nifti1Image object at

0x7fdfb794d9c0>

/home/runner/work/nilearn/nilearn/examples/07_advanced/plot_advanced_decoding_scikit.py:87: UserWarning:

[NiftiMasker.fit] Generation of a mask has been requested (imgs != None) while a mask was given at masker creation. Given mask will be used.

\[NiftiMasker.wrapped] Resampling mask

________________________________________________________________________________

[Memory] Calling nilearn.image.resampling.resample_img...

resample_img(<nibabel.nifti1.Nifti1Image object at 0x7fdfb4434340>, target_affine=None, target_shape=None, copy=False, interpolation='nearest')

_____________________________________________________resample_img - 0.0s, 0.0min

\[NiftiMasker.wrapped] Finished fit

/home/runner/work/nilearn/nilearn/examples/07_advanced/plot_advanced_decoding_scikit.py:87: FutureWarning:

boolean values for 'standardize' will be deprecated in nilearn 0.15.0.

Use 'zscore_sample' instead of 'True' or use 'None' instead of 'False'.

________________________________________________________________________________

[Memory] Calling nilearn.maskers.nifti_masker.filter_and_mask...

filter_and_mask(<nibabel.nifti1.Nifti1Image object at 0x7fdfb794d9c0>, <nibabel.nifti1.Nifti1Image object at 0x7fdfb4434340>, { 'clean_args': None,

'clean_kwargs': {},

'cmap': 'gray',

'detrend': False,

'dtype': None,

'high_pass': None,

'high_variance_confounds': False,

'low_pass': None,

'reports': True,

'runs': 21 0

22 0

23 0

24 0

25 0

..

1427 11

1428 11

1429 11

1430 11

1431 11

Name: chunks, Length: 216, dtype: int64,

'smoothing_fwhm': 4,

'standardize': False,

'standardize_confounds': True,

't_r': None,

'target_affine': None,

'target_shape': None}, memory_level=1, memory=Memory(location=nilearn_cache/joblib), verbose=1, confounds=None, sample_mask=None, copy=True, dtype=None, sklearn_output_config=None)

\[NiftiMasker.wrapped] Loading data from <nibabel.nifti1.Nifti1Image object at

0x7fdfb794d9c0>

\[NiftiMasker.wrapped] Smoothing images

\[NiftiMasker.wrapped] Extracting region signals

\[NiftiMasker.wrapped] Cleaning extracted signals

__________________________________________________filter_and_mask - 1.3s, 0.0min

Cross-validation with scikit-learn¶

To train and test the model in a meaningful way we use cross-validation with

the function sklearn.model_selection.cross_val_score that computes

for you the score for the different folds of cross-validation.

from sklearn.model_selection import cross_val_score

# Here `cv=5` stipulates a 5-fold cross-validation

cv_scores = cross_val_score(svc, fmri_masked, conditions, cv=5)

print(f"SVC accuracy: {cv_scores.mean():.3f}")

SVC accuracy: 0.500

Tuning cross-validation parameters¶

You can change many parameters of the cross_validation here, for example:

use a different cross - validation scheme, for example LeaveOneGroupOut()

speed up the computation by using n_jobs = -1, which will spread the computation equally across all processors.

use a different scoring function, as a keyword or imported from scikit-learn : scoring = ‘roc_auc’

from sklearn.model_selection import LeaveOneGroupOut

cv = LeaveOneGroupOut()

cv_scores = cross_val_score(

svc,

fmri_masked,

conditions,

cv=cv,

scoring="roc_auc",

groups=run_label,

n_jobs=2,

)

print(f"SVC accuracy (tuned parameters): {cv_scores.mean():.3f}")

SVC accuracy (tuned parameters): 0.642

Measuring the chance level¶

sklearn.dummy.DummyClassifier (purely random) estimators are the

simplest way to measure prediction performance at chance. A more controlled

way, but slower, is to do permutation testing on the labels, with

sklearn.model_selection.permutation_test_score.

Dummy estimator¶

from sklearn.dummy import DummyClassifier

null_cv_scores = cross_val_score(

DummyClassifier(), fmri_masked, conditions, cv=cv, groups=run_label

)

print(f"Dummy accuracy: {null_cv_scores.mean():.3f}")

Dummy accuracy: 0.500

Permutation test¶

from sklearn.model_selection import permutation_test_score

null_cv_scores = permutation_test_score(

svc, fmri_masked, conditions, cv=cv, groups=run_label

)[1]

print(f"Permutation test score: {null_cv_scores.mean():.3f}")

Permutation test score: 0.496

Decoding without a mask: Anova-SVM in scikit-lean¶

We can also implement feature selection before decoding as a scikit-learn

pipeline`(:class:`sklearn.pipeline.Pipeline). For this, we need to import

the sklearn.feature_selection module and use

sklearn.feature_selection.f_classif, a simple F-score

based feature selection (a.k.a.

Anova).

from sklearn.feature_selection import SelectPercentile, f_classif

from sklearn.pipeline import Pipeline

from sklearn.svm import LinearSVC

feature_selection = SelectPercentile(f_classif, percentile=10)

linear_svc = LinearSVC(dual=True, random_state=0)

anova_svc = Pipeline([("anova", feature_selection), ("svc", linear_svc)])

# We can use our ``anova_svc`` object exactly as we were using our ``svc``

# object previously.

# As we want to investigate our model, we use sklearn `cross_validate` function

# with `return_estimator = True` instead of cross_val_score,

# to save the estimator

from sklearn.model_selection import cross_validate

fitted_pipeline = cross_validate(

anova_svc,

fmri_masked,

conditions,

cv=cv,

groups=run_label,

return_estimator=True,

)

print(f"ANOVA+SVC test score: {fitted_pipeline['test_score'].mean():.3f}")

/home/runner/work/nilearn/nilearn/.tox/doc/lib/python3.10/site-packages/sklearn/svm/_base.py:1235: ConvergenceWarning:

Liblinear failed to converge, increase the number of iterations.

/home/runner/work/nilearn/nilearn/.tox/doc/lib/python3.10/site-packages/sklearn/svm/_base.py:1235: ConvergenceWarning:

Liblinear failed to converge, increase the number of iterations.

/home/runner/work/nilearn/nilearn/.tox/doc/lib/python3.10/site-packages/sklearn/svm/_base.py:1235: ConvergenceWarning:

Liblinear failed to converge, increase the number of iterations.

/home/runner/work/nilearn/nilearn/.tox/doc/lib/python3.10/site-packages/sklearn/svm/_base.py:1235: ConvergenceWarning:

Liblinear failed to converge, increase the number of iterations.

/home/runner/work/nilearn/nilearn/.tox/doc/lib/python3.10/site-packages/sklearn/svm/_base.py:1235: ConvergenceWarning:

Liblinear failed to converge, increase the number of iterations.

/home/runner/work/nilearn/nilearn/.tox/doc/lib/python3.10/site-packages/sklearn/svm/_base.py:1235: ConvergenceWarning:

Liblinear failed to converge, increase the number of iterations.

/home/runner/work/nilearn/nilearn/.tox/doc/lib/python3.10/site-packages/sklearn/svm/_base.py:1235: ConvergenceWarning:

Liblinear failed to converge, increase the number of iterations.

/home/runner/work/nilearn/nilearn/.tox/doc/lib/python3.10/site-packages/sklearn/svm/_base.py:1235: ConvergenceWarning:

Liblinear failed to converge, increase the number of iterations.

/home/runner/work/nilearn/nilearn/.tox/doc/lib/python3.10/site-packages/sklearn/svm/_base.py:1235: ConvergenceWarning:

Liblinear failed to converge, increase the number of iterations.

/home/runner/work/nilearn/nilearn/.tox/doc/lib/python3.10/site-packages/sklearn/svm/_base.py:1235: ConvergenceWarning:

Liblinear failed to converge, increase the number of iterations.

/home/runner/work/nilearn/nilearn/.tox/doc/lib/python3.10/site-packages/sklearn/svm/_base.py:1235: ConvergenceWarning:

Liblinear failed to converge, increase the number of iterations.

/home/runner/work/nilearn/nilearn/.tox/doc/lib/python3.10/site-packages/sklearn/svm/_base.py:1235: ConvergenceWarning:

Liblinear failed to converge, increase the number of iterations.

ANOVA+SVC test score: 0.546

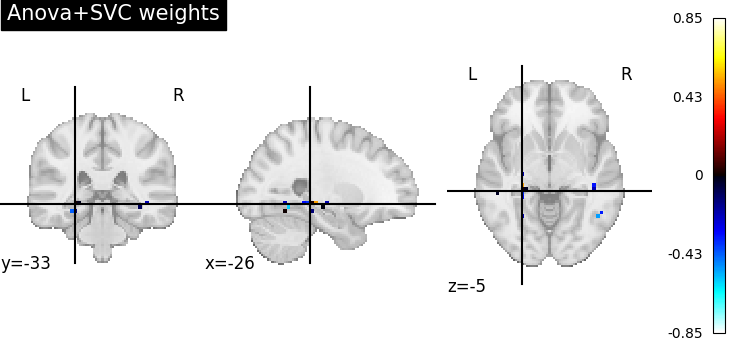

Visualize the ANOVA + SVC’s discriminating weights¶

# retrieve the pipeline fitted on the first cross-validation fold and its SVC

# coefficients

first_pipeline = fitted_pipeline["estimator"][0]

svc_coef = first_pipeline.named_steps["svc"].coef_

print(

"After feature selection, "

f"the SVC is trained only on {svc_coef.shape[1]} features"

)

# We invert the feature selection step to put these coefs in the right 2D place

full_coef = first_pipeline.named_steps["anova"].inverse_transform(svc_coef)

print(

"After inverting feature selection, "

f"we have {full_coef.shape[1]} features back"

)

# We apply the inverse of masking on these to make a 4D image that we can plot

from nilearn.plotting import plot_stat_map, show

weight_img = masker.inverse_transform(full_coef)

plot_stat_map(weight_img, title="Anova+SVC weights")

show()

After feature selection, the SVC is trained only on 47 features

After inverting feature selection, we have 464 features back

\[NiftiMasker.inverse_transform] Computing image from signals

________________________________________________________________________________

[Memory] Calling nilearn.masking.unmask...

unmask(array([[0., ..., 0.]]), <nibabel.nifti1.Nifti1Image object at 0x7fdfb4434340>)

___________________________________________________________unmask - 0.0s, 0.0min

Going further with scikit-learn¶

Changing the prediction engine¶

To change the prediction engine, we just need to import it and use in our pipeline instead of the SVC. We can try Fisher’s Linear Discriminant Analysis (LDA)

# Construct the new estimator object and use it in a new pipeline after anova

from sklearn.discriminant_analysis import LinearDiscriminantAnalysis

feature_selection = SelectPercentile(f_classif, percentile=10)

lda = LinearDiscriminantAnalysis()

anova_lda = Pipeline([("anova", feature_selection), ("LDA", lda)])

# Recompute the cross-validation score:

import numpy as np

cv_scores = cross_val_score(

anova_lda, fmri_masked, conditions, cv=cv, groups=run_label

)

classification_accuracy = np.mean(cv_scores)

n_conditions = len(set(conditions)) # number of target classes

print(

f"ANOVA + LDA classification accuracy: {classification_accuracy:.4f} "

f"/ Chance Level: {1.0 / n_conditions:.4f}"

)

ANOVA + LDA classification accuracy: 0.7083 / Chance Level: 0.5000

Changing the feature selection¶

Let’s say that you want a more sophisticated feature selection, for example a Recursive Feature Elimination(RFE) before a svc. We follows the same principle.

# Import it and define your fancy objects

from sklearn.feature_selection import RFE

svc = SVC()

rfe = RFE(SVC(kernel="linear", C=1.0), n_features_to_select=50, step=0.25)

# Create a new pipeline, composing the two classifiers `rfe` and `svc`

rfe_svc = Pipeline([("rfe", rfe), ("svc", svc)])

# Recompute the cross-validation score

# cv_scores = cross_val_score(rfe_svc,

# fmri_masked,

# target,

# cv=cv,

# n_jobs=2,

# verbose=1)

# But, be aware that this can take * A WHILE * ...

References¶

Total running time of the script: (0 minutes 18.674 seconds)

Estimated memory usage: 1018 MB